Fix #31395

This regression was introduced by #30273. To find out how GitHub handles this case, I did [some tests](https://github.com/go-gitea/gitea/issues/31395#issuecomment-2278929115).

This PR uses a redirect instead of checking whether the corresponding `.md` file exists when rendering the link, because GitHub also uses a redirect.

With this PR, there is no need to resolve the raw wiki link when rendering a wiki page. If a wiki link points to a raw file, access is redirected to the raw link.

Fix #31271.

When gogit is enabled, `IsObjectExist` calls `repo.gogitRepo.ResolveRevision`, which is not correct. That function checks references, not objects. It can work with a commit hash, since a commit hash is both a valid reference and a commit object, but it does not work with blob objects.

As a result, all blob objects are reported as nonexistent, which causes #31271.

Support compression for Actions logs to save storage space and

bandwidth. Inspired by

https://github.com/go-gitea/gitea/issues/24256#issuecomment-1521153015

The biggest challenge is that the compression format must be [seekable](https://github.com/facebook/zstd/blob/dev/contrib/seekable_format/zstd_seekable_compression_format.md), so that when users view only part of the log lines, Gitea doesn't need to download the whole compressed file and decompress it.

That means gzip cannot help here. After some research, there aren't many choices (bgzip and xz, for example), and I think zstd is the most popular one. It has Go implementations in [zstd](https://github.com/klauspost/compress/tree/master/zstd) and [zstd-seekable-format-go](https://github.com/SaveTheRbtz/zstd-seekable-format-go), and even better, it has good compatibility: a seekable-format zstd file can be read by a regular zstd reader.

This PR introduces a new package `zstd` to combine and wrap the two

packages, to provide a unified and easy-to-use API.

A new setting `LOG_COMPRESSION` is added to the config. Although I don't see any reason not to use compression, I think it's a good idea to keep the default as `none` to be consistent with old versions.

`LOG_COMPRESSION` takes effect only for new log files. It adds `.zst` as an extension to the file name, so Gitea can determine from the file name whether decompression is needed when reading. Old files keep their format, since it's not worth converting them: they will be cleared after #31735.
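The file-name convention described above can be sketched as follows. This is only an illustration: `storedLogName` and `needsDecompression` are hypothetical helpers, not functions from the PR, and `zstd` as the non-default value of `LOG_COMPRESSION` is an assumption.

```go
package main

import (
	"fmt"
	"strings"
)

// zstExt is the extension the description says is added to compressed logs.
const zstExt = ".zst"

// storedLogName is a hypothetical helper: it returns the name a NEW log file
// would be stored under for a given LOG_COMPRESSION value.
// Assumption: "zstd" is the value that enables compression.
func storedLogName(name, compression string) string {
	if compression == "zstd" {
		return name + zstExt
	}
	return name // "none": keep the plain name
}

// needsDecompression decides from the file name alone whether a log
// has to be decompressed before it is read.
func needsDecompression(name string) bool {
	return strings.HasSuffix(name, zstExt)
}

func main() {
	for _, c := range []string{"none", "zstd"} {
		n := storedLogName("1234.log", c)
		fmt.Println(n, needsDecompression(n))
	}
}
```

Because the decision depends only on the name, old uncompressed files and new compressed files can coexist without a migration step.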

<img width="541" alt="image"

src="https://github.com/user-attachments/assets/e9598764-a4e0-4b68-8c2b-f769265183c9">

Found at

https://github.com/go-gitea/gitea/pull/31790#issuecomment-2272898915

`unit-tests-gogit` never worked, since the workflow sets `TAGS` to `gogit` but the Makefile uses `TEST_TAGS`.

This PR adds the values of `TAGS` to `TEST_TAGS`, ensuring that setting

`TAGS` is always acceptable and avoiding confusion about which one

should be set.

Fix #31738

When pushing a new branch, the old commit is zero, and most git commands cannot recognize the zero commit ID. To get the files changed in the push, we need to find the first commit at which this branch diverges. In most situations, we can check commits one by one until we find one that is contained in another branch, and treat that commit as the divergence point.

In a pre-receive hook this is more difficult, because none of the commits have been merged yet and they are actually stored in a temporary place by git. So we need to pass some environment variables to let git know the commits exist.

close #27031

If an RPM package does not contain a matching GPG signature, installation will fail (see #27031). This PR adds auto-signing of RPM uploads.

This option is turned off by default for compatibility.

If a pull request review was assigned to a team, the members of the team were not shown in the "requested_reviewers" field, so the field was null. As a solution, I added the team members to the array.

Fix #31764

Fix #31137.

Replace #31623 and #31697.

When migrating LFS objects, if any object fails (for example because some objects are lost, which is not really critical), Gitea stops migrating LFS immediately but treats the migration as successful.

This PR checks the error according to the [LFS api

doc](https://github.com/git-lfs/git-lfs/blob/main/docs/api/batch.md#successful-responses).

> LFS object error codes should match HTTP status codes where possible:

>

> - 404 - The object does not exist on the server.

> - 409 - The specified hash algorithm disagrees with the server's acceptable options.

> - 410 - The object was removed by the owner.

> - 422 - Validation error.

If the error is `404`, it's safe to ignore it and continue migration.

Otherwise, stop the migration and mark it as failed to ensure data

integrity of LFS objects.

Maybe we should also ignore other errors (perhaps `410`? I'm not sure what the difference is between "does not exist" and "removed by the owner"). We can add that later if users report that they failed to migrate LFS because of an error that should be ignored.
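The rule described above (skip on `404`, fail on anything else) could look roughly like this. It is a minimal sketch: `objectError` and `checkObjectError` are hypothetical names, not the types used in Gitea's LFS client.

```go
package main

import (
	"fmt"
	"net/http"
)

// objectError mirrors the per-object "error" member of an LFS batch response.
// Hypothetical type for illustration only.
type objectError struct {
	Code    int
	Message string
}

// checkObjectError reports whether an object can be skipped (skip == true)
// or whether the whole migration has to stop (err != nil).
func checkObjectError(oid string, e *objectError) (skip bool, err error) {
	if e == nil {
		return false, nil // no error: migrate the object normally
	}
	if e.Code == http.StatusNotFound {
		return true, nil // object is missing upstream: safe to ignore
	}
	// Any other code (409, 410, 422, ...) fails the migration.
	return false, fmt.Errorf("LFS object %s: %d %s", oid, e.Code, e.Message)
}

func main() {
	for _, e := range []*objectError{nil, {404, "Not found"}, {410, "Gone"}} {
		skip, err := checkObjectError("abc123", e)
		fmt.Println(skip, err)
	}
}
```

If `410` later turns out to be safe to ignore as well, only the condition in `checkObjectError` would need to change.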

See discussion on #31561 for some background.

The introspect endpoint was using the OIDC token itself for

authentication. This fixes it to use basic authentication with the

client ID and secret instead:

* Applications with a valid client ID and secret should be able to

successfully introspect an invalid token, receiving a 200 response

with JSON data that indicates the token is invalid

* Requests with an invalid client ID and secret should not be able

to introspect, even if the token itself is valid

Unlike #31561 (which just future-proofed the current behavior against

future changes to `DISABLE_QUERY_AUTH_TOKEN`), this is a potential

compatibility break (some introspection requests without valid client

IDs that would previously succeed will now fail). Affected deployments

must begin sending a valid HTTP basic authentication header with their

introspection requests, with the username set to a valid client ID and

the password set to the corresponding client secret.
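For affected clients, an introspection request with HTTP basic authentication might be built as in the sketch below. The host name, the `/login/oauth/introspect` path, and the credentials are placeholders and assumptions; the `token` form field follows the usual OAuth 2.0 token introspection convention.

```go
package main

import (
	"fmt"
	"net/http"
	"net/url"
	"strings"
)

// newIntrospectRequest builds a POST request that authenticates with the
// client ID and secret instead of the token being introspected.
// Hypothetical helper for illustration.
func newIntrospectRequest(endpoint, clientID, clientSecret, token string) (*http.Request, error) {
	form := url.Values{"token": {token}}
	req, err := http.NewRequest(http.MethodPost, endpoint, strings.NewReader(form.Encode()))
	if err != nil {
		return nil, err
	}
	req.Header.Set("Content-Type", "application/x-www-form-urlencoded")
	// Username is the client ID, password is the client secret.
	req.SetBasicAuth(clientID, clientSecret)
	return req, nil
}

func main() {
	// Placeholder endpoint and credentials; replace with real values.
	req, err := newIntrospectRequest(
		"https://gitea.example.com/login/oauth/introspect",
		"my-client-id", "my-client-secret", "token-to-check")
	if err != nil {
		panic(err)
	}
	user, _, ok := req.BasicAuth()
	fmt.Println(req.Method, req.URL.Path, user, ok)
}
```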

When you are entering a number in the issue search, you likely want the

issue with the given ID (code internal concept: issue index).

As such, when a number is detected, the issue with the corresponding ID

will now be added to the results.
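The detection step can be sketched like this. `issueIndexFromKeyword` is a hypothetical helper, and how the matching issue is merged into the results is left out.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// issueIndexFromKeyword returns the issue index if the whole search keyword
// is a positive number, so the caller can add that issue to the results.
// Hypothetical helper for illustration.
func issueIndexFromKeyword(keyword string) (int64, bool) {
	idx, err := strconv.ParseInt(strings.TrimSpace(keyword), 10, 64)
	if err != nil || idx <= 0 {
		return 0, false
	}
	return idx, true
}

func main() {
	for _, kw := range []string{"4479", " 12 ", "fix 12", "-3"} {
		idx, ok := issueIndexFromKeyword(kw)
		fmt.Printf("%q -> %d %v\n", kw, idx, ok)
	}
}
```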

Fixes #4479

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

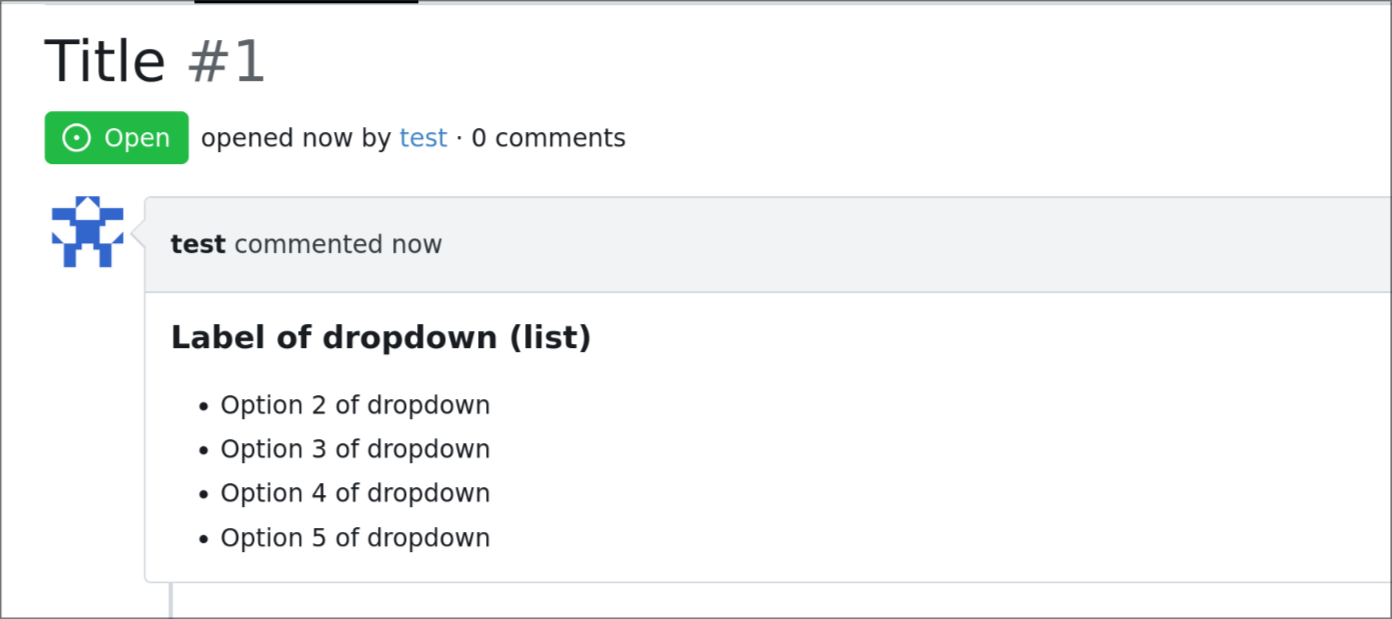

An issue template dropdown can have many entries, and when multi-select is enabled it can be better to render them as a list. This adds an option to the issue template engine to do so.

DOCS: https://gitea.com/gitea/docs/pulls/19

---

## demo:

```yaml
name: Name
title: Title
about: About
labels: ["label1", "label2"]
ref: Ref
body:
  - type: dropdown
    id: id6
    attributes:
      label: Label of dropdown (list)
      description: Description of dropdown
      multiple: true
      list: true
      options:
        - Option 1 of dropdown
        - Option 2 of dropdown
        - Option 3 of dropdown
        - Option 4 of dropdown
        - Option 5 of dropdown
        - Option 6 of dropdown
        - Option 7 of dropdown
        - Option 8 of dropdown
        - Option 9 of dropdown
```

---

*Sponsored by Kithara Software GmbH*

We have some instances that only allow using an external authentication

source for authentication. In this case, users changing their email,

password, or linked OpenID connections will not have any effect, and

we'd like to prevent showing that to them to prevent confusion.

Included in this are several changes to support this:

* A new setting to disable user managed authentication credentials

(email, password & OpenID connections)

* A new setting to disable user managed MFA (2FA codes & WebAuthn)

* Fix an issue where some templates had separate logic for determining

if a feature was disabled since it didn't check the globally disabled

features

* Hide more user setting pages in the navbar when their settings aren't

enabled

---------

Co-authored-by: Kyle D <kdumontnu@gmail.com>

Fixes #22722

### Problem

Currently, it is not possible to force push to a branch with branch

protection rules in place. There are often times where this is necessary

(CI workflows/administrative tasks etc).

The current workaround is to rename/remove the branch protection,

perform the force push, and then reinstate the protections.

### Solution

Provide an additional section in the branch protection rules to allow

users to specify which users with push access can also force push to the

branch. The default value of the rule will be set to `Disabled`, and the

UI is intuitive and very similar to the `Push` section.

It is worth noting in this implementation that allowing force push does

not override regular push access, and both will need to be enabled for a

user to force push.

This applies both to a manual force push to a remote and to updating a PR by rebase in the Gitea UI (which requires a force push).

This modifies the `BranchProtection` API structs to add:

- `enable_force_push bool`

- `enable_force_push_whitelist bool`

- `force_push_whitelist_usernames string[]`

- `force_push_whitelist_teams string[]`

- `force_push_whitelist_deploy_keys bool`
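As a rough picture of the new fields and of the "both must be enabled" rule, consider the sketch below. The struct and `canForcePush` are hypothetical; only the JSON field names come from the list above, and the whitelist check is simplified to usernames (teams and deploy keys are ignored here).

```go
package main

import (
	"encoding/json"
	"fmt"
)

// forcePushSettings holds only the fields this PR adds to the API structs.
// Hypothetical type; the real BranchProtection struct has many more fields.
type forcePushSettings struct {
	EnableForcePush              bool     `json:"enable_force_push"`
	EnableForcePushWhitelist     bool     `json:"enable_force_push_whitelist"`
	ForcePushWhitelistUsernames  []string `json:"force_push_whitelist_usernames"`
	ForcePushWhitelistTeams      []string `json:"force_push_whitelist_teams"`
	ForcePushWhitelistDeployKeys bool     `json:"force_push_whitelist_deploy_keys"`
}

// canForcePush illustrates that force push never overrides regular push
// access: the user needs both. Simplified assumption: with the whitelist
// enabled, only listed usernames qualify.
func canForcePush(s forcePushSettings, user string, canPush bool) bool {
	if !canPush || !s.EnableForcePush {
		return false
	}
	if !s.EnableForcePushWhitelist {
		return true
	}
	for _, u := range s.ForcePushWhitelistUsernames {
		if u == user {
			return true
		}
	}
	return false
}

func main() {
	s := forcePushSettings{
		EnableForcePush:             true,
		EnableForcePushWhitelist:    true,
		ForcePushWhitelistUsernames: []string{"ci-bot"},
	}
	out, err := json.MarshalIndent(s, "", "  ")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(out))
	fmt.Println(canForcePush(s, "ci-bot", true), canForcePush(s, "ci-bot", false))
}
```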

### Updated Branch Protection UI:

<img width="943" alt="image"

src="https://github.com/go-gitea/gitea/assets/79623665/7491899c-d816-45d5-be84-8512abd156bf">

### Pull Request `Update branch by Rebase` option enabled with source

branch `test` being a protected branch:

<img width="1038" alt="image"

src="https://github.com/go-gitea/gitea/assets/79623665/57ead13e-9006-459f-b83c-7079e6f4c654">

---------

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Running git update-index for every individual file is slow, so add and

remove everything with a single git command.

When such a big commit lands in the default branch, it could cause PR

creation and patch checking for all open PRs to be slow, or time out

entirely. For example, a commit that removes 1383 files was measured to

take more than 60 seconds and timed out. With this change checking took

about a second.

This is related to #27967, though this will not help with commits that

change many lines in few files.
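The idea of batching all paths into one invocation can be sketched as below. This assumes the single command is `git update-index --add --remove -z --stdin`, which reads NUL-separated paths from standard input; the exact invocation used in the PR may differ, and both helpers are hypothetical.

```go
package main

import (
	"bytes"
	"fmt"
	"os/exec"
)

// updateIndexInput joins all paths into the NUL-terminated list that
// `git update-index -z --stdin` expects.
func updateIndexInput(paths []string) []byte {
	var buf bytes.Buffer
	for _, p := range paths {
		buf.WriteString(p)
		buf.WriteByte(0)
	}
	return buf.Bytes()
}

// updateIndexCmd prepares ONE git process for all changed paths instead of
// one process per file. The command is only built here, not executed.
func updateIndexCmd(repoDir string, paths []string) *exec.Cmd {
	cmd := exec.Command("git", "update-index", "--add", "--remove", "-z", "--stdin")
	cmd.Dir = repoDir
	cmd.Stdin = bytes.NewReader(updateIndexInput(paths))
	return cmd
}

func main() {
	cmd := updateIndexCmd("/path/to/repo", []string{"a.txt", "dir/b c.txt"})
	fmt.Println(cmd.Args)
	fmt.Printf("%q\n", updateIndexInput([]string{"a.txt", "dir/b c.txt"}))
}
```

With `-z`, paths containing spaces or newlines need no quoting, which matters when the list comes from an arbitrary commit.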

Support legacy _links LFS batch response.

Fixes #31512.

This is a backwards-compatible change to the LFS client so that, when mirroring from an upstream that has a batch API, it can download objects whether the responses contain the `_links` field or its successor, the `actions` field. When Gitea must fall back to the legacy `_links` field, a log line is emitted at INFO level, which looks like this:

```

...s/lfs/http_client.go:188:performOperation() [I] <LFSPointer ee95d0a27ccdfc7c12516d4f80dcf144a5eaf10d0461d282a7206390635cdbee:160> is using a deprecated batch schema response!

```

I've only run `test-backend` with this code, but added a new test to

cover this case. Additionally I have a fork with this change deployed

which I've confirmed syncs LFS from Gitea<-Artifactory (which has legacy

`_links`) as well as from Gitea<-Gitea (which has the modern `actions`).
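The fallback between the two schemas can be pictured with the following sketch. The types are hypothetical simplifications of a batch response object, not the structs in Gitea's LFS client.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// link is a simplified action entry; real responses may also carry headers
// and expiry information.
type link struct {
	Href string `json:"href"`
}

// objectResponse accepts both the modern `actions` field and the legacy
// `_links` field. Hypothetical type for illustration.
type objectResponse struct {
	Oid     string          `json:"oid"`
	Size    int64           `json:"size"`
	Actions map[string]link `json:"actions"`
	Links   map[string]link `json:"_links"`
}

// downloadLink prefers `actions` and falls back to `_links`, reporting
// whether the deprecated schema was used so the caller can log it.
func (o objectResponse) downloadLink() (l link, legacy bool, ok bool) {
	if a, found := o.Actions["download"]; found {
		return a, false, true
	}
	if a, found := o.Links["download"]; found {
		return a, true, true
	}
	return link{}, false, false
}

func main() {
	samples := []string{
		`{"oid":"ee95","size":160,"actions":{"download":{"href":"https://new.example/obj"}}}`,
		`{"oid":"ee95","size":160,"_links":{"download":{"href":"https://old.example/obj"}}}`,
	}
	for _, s := range samples {
		var o objectResponse
		if err := json.Unmarshal([]byte(s), &o); err != nil {
			panic(err)
		}
		l, legacy, ok := o.downloadLink()
		fmt.Println(l.Href, legacy, ok)
	}
}
```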

Signed-off-by: Royce Remer <royceremer@gmail.com>

This change fixes cases where a Wiki page refers to a video stored in the Wiki repository using a relative path. It follows the similar case that has already been implemented for images.

Test plan:

- Create repository and Wiki page

- Clone the Wiki repository

- Add video to it, say `video.mp4`

- Modify the markdown file to refer to the video using `<video src="video.mp4">`

- Commit the Wiki page

- Observe that the video is properly displayed

---------

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

This PR modifies the structs for editing and creating org teams to allow

team names to be up to 255 characters. The previous maximum length was

30 characters.

This PR only does "renaming":

* `Route` should be `Router` (and chi router is also called "router")

* `Params` should be `PathParam` (to distinguish it from URL query param, and to match `FormString`)

* Use lower case for private functions to avoid exposing or abusing

Parse base path and tree path so that media links can be correctly

created with /media/.

Resolves #31294

---------

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Fix #31361, and add tests

And this PR introduces an undocumented & debug-purpose-only config

option: `USE_SUB_URL_PATH`. It does nothing for end users, it only helps

the development of sub-path related problems.

And also fix #31366

Co-authored-by: @ExplodingDragon

Fix #31327

This is a quick patch to fix the bug.

Some parameters are using 0, some are using -1. I think it needs a

refactor to keep consistent. But that will be another PR.

The PR replaces all `goldmark/util.BytesToReadOnlyString` with

`util.UnsafeBytesToString`, `goldmark/util.StringToReadOnlyBytes` with

`util.UnsafeStringToBytes`. This removes one `TODO`.

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Enable [unparam](https://github.com/mvdan/unparam) linter.

Often I could not tell why a parameter was intentionally left unused, so I added `//nolint` in those cases, such as the webhook request creation functions that never use `ctx`.

---------

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: delvh <dev.lh@web.de>

This solution implements a new config variable `MAX_ROWS`, which corresponds to "Maximum allowed rows to render CSV files. (0 for no limit)", and rewrites the Render function for CSV files in the markup module. The render function now reads the file only once, with `MAX_FILE_SIZE`+1 as the reader limit and `MAX_ROWS` as the row limit. When the file is larger than `MAX_FILE_SIZE` or has more rows than `MAX_ROWS`, it renders only up to the limit and displays a user-friendly warning, in the user's language, that the rendered data is not complete.

---

Previously, when a CSV file was larger than the limit, the render

function lost its function to render the code. There were also multiple

reads to the file, in order to determine its size and render or

pre-render.

The warning: