Fix #28056

This PR checks whether the repository has zero branches in the database
when a branch is pushed. If so, the repository has not been synced yet.

This happens when a user upgrades from v1.20 to v1.21 and then pushes
branches without visiting the repository's web UI: all repository routes
check whether a branch sync is necessary, but push has no such check.

Every repository is in one of two states, synced or not synced. A
repository with zero branches in the database is assumed to be unsynced;
otherwise it is considered synced. For a synced repository we only need
to update the pushed branch or insert a new one; for an unsynced
repository we do a full sync. As a result, a push adds almost no extra
load. This performs better than your approach.

In the implementation, we first try to update the branch. If the update
succeeds with more than zero affected records, we are done, because the
branch was already in the database. If no record is affected, the branch
does not exist in the database, and there are two possibilities: either
this is a new branch, in which case we only need to insert the record, or
the branches have not been synced yet, in which case we need to sync all
branches into the database.
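The update-first flow described above can be sketched roughly as follows. This is a minimal in-memory illustration, not the actual implementation: `branchStore`, `updateBranch`, `syncAll` and `onPush` are hypothetical names, and a map stands in for the database table.

```go
package main

import "fmt"

// branchStore is a hypothetical stand-in for the branch table of one repository.
type branchStore struct {
	commits map[string]string // branch name -> commit ID
}

// updateBranch mimics an UPDATE statement and reports the number of affected rows.
func (s *branchStore) updateBranch(name, commitID string) int {
	if _, ok := s.commits[name]; !ok {
		return 0
	}
	s.commits[name] = commitID
	return 1
}

// syncAll mimics a full sync: it copies every branch found in git into the store.
func (s *branchStore) syncAll(gitBranches map[string]string) {
	for name, commitID := range gitBranches {
		s.commits[name] = commitID
	}
}

// onPush returns which path was taken: "updated", "inserted" or "synced".
func onPush(s *branchStore, gitBranches map[string]string, name, commitID string) string {
	if s.updateBranch(name, commitID) > 0 {
		return "updated" // the branch was already in the database
	}
	if len(s.commits) == 0 {
		s.syncAll(gitBranches) // zero branches: the repository was never synced
		return "synced"
	}
	s.commits[name] = commitID // synced repository, so this is a new branch
	return "inserted"
}

func main() {
	gitBranches := map[string]string{"main": "c1", "dev": "c2"}
	store := &branchStore{commits: map[string]string{}}
	fmt.Println(onPush(store, gitBranches, "dev", "c2"))
	fmt.Println(onPush(store, gitBranches, "dev", "c3"))
	fmt.Println(onPush(store, gitBranches, "feature", "c4"))
}
```

The common case (an already known branch) costs a single update, which is why the extra load per push stays small.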

The function `GetByBean` has an obvious defect: fields holding empty
values are ignored when the query is built. Callers can then get a wrong
result, which could potentially lead to a security problem.

To avoid this, this PR removes `GetByBean` and all references to it, and
introduces new generic functions to use instead.

The recommended usage is shown below.

```go
// query an object by its ID
obj, err := db.GetByID[Object](ctx, id)
// query with other conditions
obj, err := db.Get[Object](ctx, builder.Eq{"a": a, "b": b})
```
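To illustrate the defect, here is a small self-contained sketch of how a bean-style query can silently drop a filter. `Token`, `condsFromBean` and `condsExplicit` are hypothetical names made up for this example; they only imitate the behavior and are not the ORM's API.

```go
package main

import "fmt"

// Token is a hypothetical model with two queryable columns.
type Token struct {
	UserID int64
	Name   string
}

// condsFromBean imitates a bean-based query: every field holding its zero
// value is silently left out of the WHERE clause.
func condsFromBean(t Token) []string {
	var conds []string
	if t.UserID != 0 {
		conds = append(conds, fmt.Sprintf("user_id = %d", t.UserID))
	}
	if t.Name != "" {
		conds = append(conds, fmt.Sprintf("name = %q", t.Name))
	}
	return conds
}

// condsExplicit imitates an explicit condition map: every listed column is
// part of the query, even when its value is empty.
func condsExplicit(userID int64, name string) []string {
	return []string{
		fmt.Sprintf("user_id = %d", userID),
		fmt.Sprintf("name = %q", name),
	}
}

func main() {
	// An empty name was intended as a filter, but the bean form drops it.
	fmt.Println(condsFromBean(Token{UserID: 7, Name: ""}))
	fmt.Println(condsExplicit(7, ""))
}
```

With the bean form, the query matches any token of user 7, while the explicit form keeps the empty-name condition.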

System users (Ghost, ActionsUser, etc) have a negative id and may be the

author of a comment, either because it was created by a now deleted user

or via an action using a transient token.

The GetPossibleUserByID function has special cases related to system

users and will not fail if given a negative id.
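A rough sketch of such a lookup is shown below. The concrete IDs, the user names and the in-memory `regularUsers` map are assumptions made for this illustration; only the idea (negative IDs map to system users instead of failing) comes from the description above.

```go
package main

import (
	"errors"
	"fmt"
)

// User is a reduced, hypothetical user model.
type User struct {
	ID   int64
	Name string
}

// Assumed IDs for illustration; negative IDs are reserved for system users.
const (
	ghostUserID   int64 = -1
	actionsUserID int64 = -2
)

var errUserNotExist = errors.New("user does not exist")

// regularUsers is a hypothetical stand-in for the user table.
var regularUsers = map[int64]*User{1: {ID: 1, Name: "alice"}}

// getPossibleUserByID resolves system users first and only then falls back
// to the regular lookup, so a negative ID does not produce an error.
func getPossibleUserByID(id int64) (*User, error) {
	switch id {
	case ghostUserID:
		return &User{ID: id, Name: "Ghost"}, nil
	case actionsUserID:
		return &User{ID: id, Name: "actions-user"}, nil
	}
	if u, ok := regularUsers[id]; ok {
		return u, nil
	}
	return nil, errUserNotExist
}

func main() {
	for _, id := range []int64{1, -1, -2, 42} {
		u, err := getPossibleUserByID(id)
		if err != nil {
			fmt.Println(id, "error:", err)
			continue
		}
		fmt.Println(id, u.Name)
	}
}
```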

Refs: https://codeberg.org/forgejo/forgejo/issues/1425

(cherry picked from commit 6a2d2fa24390116d31ae2507c0a93d423f690b7b)

Fixes #27819

We have support for two factor logins with the normal web login and with

basic auth. For basic auth the two factor check was implemented at three

different places and you need to know that this check is necessary. This

PR moves the check into the basic auth itself.

Fixes #27598

In #27080, the logic for the token endpoints was updated to allow
admins to create and view tokens in other accounts. However, the same
functionality was not added to the DELETE endpoint. This PR makes the
DELETE endpoint behave the same as the other token endpoints and adds
unit tests.

Closes #27455

> The mechanism responsible for long-term authentication (the 'remember
> me' cookie) uses a weak construction technique. It will hash the user's
> hashed password and the rands value; it will then call the secure cookie
> code, which will encrypt the user's name with the computed hash. If one
> were able to dump the database, they could extract those two values to
> rebuild that cookie and impersonate a user. That vulnerability exists
> from the date the dump was obtained until a user changed their password.
>
> To fix this security issue, the cookie could be created and verified
> using a different technique such as the one explained at
> https://paragonie.com/blog/2015/04/secure-authentication-php-with-long-term-persistence#secure-remember-me-cookies.

The PR removes the now obsolete setting `COOKIE_USERNAME`.

`assert.Fail()` lets the test continue executing, while `assert.FailNow()`
does not. I think these uses of `assert.Fail()` should exit immediately.

PS: perhaps it's a good idea to use
[require](https://pkg.go.dev/github.com/stretchr/testify/require)
in some places, because the assert package's default behavior is not to
stop when an error occurs, which makes it difficult to find the root
cause.

- Currently, in the cron tasks, 'Previous Time' only displays the last
time the cron library executed the function, not any manual executions
of the task.
- Store the last run's time in memory in the Task struct and use it
when it is later than the time at which the cron library last executed
this task.
- This ensures that if an instance admin manually starts a task, there is
feedback that the task is running or has been run, because the task
might run so quickly that the status icon has already changed to a
checkmark.
- Tasks that are executed at startup now reflect this as well, as the
time of that startup execution is now shown as 'Previous Time'.
- Added integration tests for the API part, which is easier to test
because querying the HTML table of cron tasks is non-trivial.
- Resolves https://codeberg.org/forgejo/forgejo/issues/949
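The "use the later of the two times" rule from the list above could look roughly like this. It is a minimal sketch under assumptions: `task`, `run` and `previousTime` are hypothetical names, and the scheduler's recorded time is passed in as a plain argument.

```go
package main

import (
	"fmt"
	"time"
)

// sampleScheduled is an arbitrary timestamp used by the example below.
var sampleScheduled = time.Date(2023, 7, 1, 0, 0, 0, 0, time.UTC)

// task is a hypothetical, trimmed-down version of a cron task entry.
type task struct {
	lastRun time.Time // set on every execution, manual or scheduled
}

// run records the execution time in memory; the real work would follow.
func (t *task) run(now time.Time) {
	t.lastRun = now
}

// previousTime returns the later of the scheduler's recorded time and the
// in-memory last run, so manual and startup runs are reflected as well.
func (t *task) previousTime(schedulerPrev time.Time) time.Time {
	if t.lastRun.After(schedulerPrev) {
		return t.lastRun
	}
	return schedulerPrev
}

func main() {
	t := &task{}
	fmt.Println(t.previousTime(sampleScheduled)) // no manual run yet
	t.run(sampleScheduled.Add(2 * time.Hour))    // manual run after the scheduled one
	fmt.Println(t.previousTime(sampleScheduled))
}
```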

(cherry picked from commit fd34fdac1408ece6b7d9fe6a76501ed9a45d06fa)

---------

Co-authored-by: Gusted <postmaster@gusted.xyz>

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

Co-authored-by: silverwind <me@silverwind.io>

- MySQL 5.7 support and testing is dropped

- MySQL tests now execute against 8.1, up from 5.7 and 8.0

- PostgreSQL 10 and 11 support is dropped

- PostgreSQL tests now execute against 16, up from 15

- MSSQL 2008 support is dropped

- MSSQL tests now run against locked 2022 version

Fixes: https://github.com/go-gitea/gitea/issues/25657

Ref: https://endoflife.date/mysql

Ref: https://endoflife.date/postgresql

Ref: https://endoflife.date/mssqlserver

## ⚠️ BREAKING ⚠️

Support for MySQL 5.7, PostgreSQL 10 and 11, and MSSQL 2008 is dropped.

You are encouraged to upgrade to supported versions.

---------

Co-authored-by: techknowlogick <techknowlogick@gitea.com>

Part of #27065

This PR touches functions used in templates. As templates are not
statically typed, errors are harder to find, but I hope I caught them
all. Some additional testing by other people would not hurt.

Blank issues should be enabled unless they are explicitly disabled
through the `blank_issues_enabled` field of the issue config. The
implementation currently has a bug: if you create an issue config file
with only `contact_links` and without a `blank_issues_enabled` field,
`blank_issues_enabled` is set to false by default.

The fix is only one line, but I decided to also improve the tests to
make sure there are no other problems with the implementation.

This is a bugfix, so it should be backported to 1.20.

Part of #27065

This reduces the usage of `db.DefaultContext`. I think I've got enough

files for the first PR. When this is merged, I will continue working on

this.

Considering how many files this PR affects, I hope it won't take too long
to merge, so I don't end up in merge conflict hell.

---------

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Currently, Artifact does not have an expiration and automatic cleanup

mechanism, and this feature needs to be added. It contains the following

key points:

- [x] add global artifact retention days option in config file. Default

value is 90 days.

- [x] add cron task to clean up expired artifacts. It should run once a

day.

- [x] support custom retention period from `retention-days: 5` in

`upload-artifact@v3`.

- [x] artifacts link in actions view should be non-clickable text when

expired.
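The expiration rule behind these points can be sketched as follows. This is a minimal illustration under assumptions: `expiresAt` and `isExpired` are hypothetical helpers, and whether a custom `retention-days` value may exceed the global setting is not stated above, so no clamping is applied here.

```go
package main

import (
	"fmt"
	"time"
)

// defaultRetentionDays mirrors the 90-day default described above.
const defaultRetentionDays = 90

// sampleCreated is an arbitrary creation time used by the example below.
var sampleCreated = time.Date(2023, 9, 1, 0, 0, 0, 0, time.UTC)

// expiresAt computes when an artifact expires. A non-positive requested value
// falls back to the global default.
func expiresAt(created time.Time, requestedDays int) time.Time {
	days := requestedDays
	if days <= 0 {
		days = defaultRetentionDays
	}
	return created.AddDate(0, 0, days)
}

// isExpired reports whether the daily cleanup job should remove the artifact.
func isExpired(expires, now time.Time) bool {
	return now.After(expires)
}

func main() {
	custom := expiresAt(sampleCreated, 5) // e.g. `retention-days: 5`
	fallback := expiresAt(sampleCreated, 0)
	fmt.Println(custom.Format("2006-01-02"), fallback.Format("2006-01-02"))
	fmt.Println(isExpired(custom, sampleCreated.AddDate(0, 0, 10)))
}
```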

They currently throw an Internal Server Error when you use them without a
token. Now they correctly return a `token is required` error.

This is not a security issue. If you use these endpoints with a token
that doesn't have the correct permission, you get the correct error.
That behavior is not affected by this PR.

1. The old `prepareQueryArg` did double-unescaping of form value.

2. By the way, remove the unnecessary `ctx.Flash = ...` in

`MockContext`.

Co-authored-by: Giteabot <teabot@gitea.io>

Just like `models/unittest`, the testing helper functions should be in a

separate package: `contexttest`

And complete the TODO:

> // TODO: move this function to other packages, because it depends on "models" package

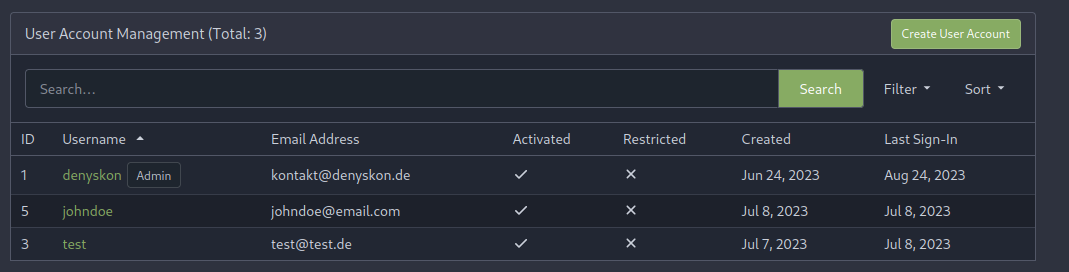

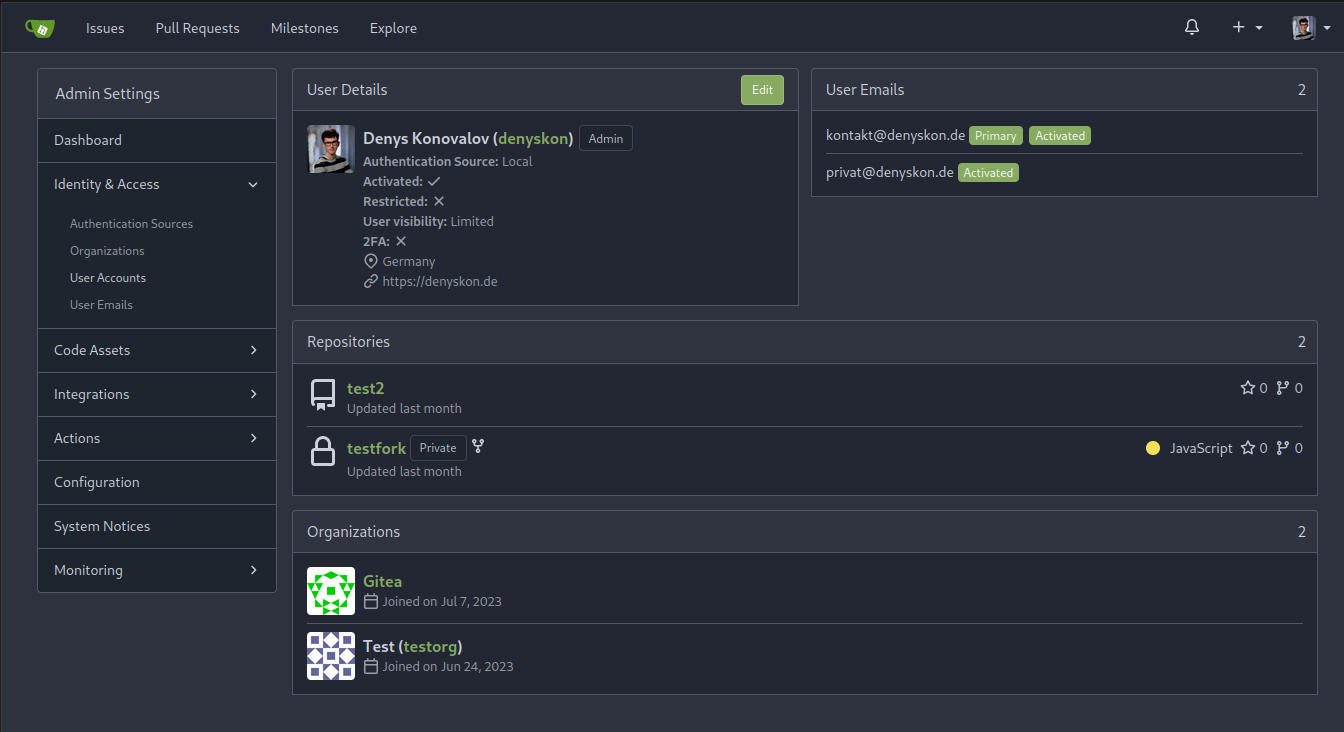

This PR implements a proposal to clean up the admin users table by

moving some information out to a separate user details page (which also

displays some additional information).

Other changes:

- move edit user page from `/admin/users/{id}` to

`/admin/users/{id}/edit` -> `/admin/users/{id}` now shows the user

details page

- show if user is instance administrator as a label instead of a

separate column

- separate explore users template into a page- and a shared one, to make

it possible to use it on the user details page

- fix issue where there was no margin between alert message and

following content on admin pages

<details>

<summary>Screenshots</summary>

</details>

Partially resolves #25939

---------

Co-authored-by: Giteabot <teabot@gitea.io>

- Resolves https://codeberg.org/forgejo/forgejo/issues/580

- Return an `upload_field` in every release API response, which points to
the API URL for uploading new assets.

- Adds unit test.

- Adds integration testing to verify URL is returned correctly and that

upload endpoint actually works

---------

Co-authored-by: Gusted <postmaster@gusted.xyz>

Fixes: #26333.

Previously, this endpoint only updated the `StatusCheckContexts` field
when `EnableStatusCheck==true`, which made it impossible to clear the
array otherwise.

This patch uses slice `nil`-ness to decide whether to update the list of

checks. The field is ignored when either the client explicitly passes in

a null, or just omits the field from the json ([which causes

`json.Unmarshal` to leave the struct field

unchanged](https://go.dev/play/p/Z2XHOILuB1Q)). I think this is a better

measure of intent than whether the `EnableStatusCheck` flag was set,

because it matches the semantics of other field types.
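The nil-versus-empty distinction that this relies on can be demonstrated with a short standalone program. The `editOption` struct and `applyContexts` helper are hypothetical and reduced for illustration; the JSON keys are assumptions as well.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// editOption is a hypothetical, reduced request body.
type editOption struct {
	EnableStatusCheck   *bool    `json:"enable_status_check"`
	StatusCheckContexts []string `json:"status_check_contexts"`
}

// applyContexts returns the new list of contexts: the current list when the
// field was omitted or null, otherwise whatever the client sent (possibly empty).
func applyContexts(current []string, body string) ([]string, error) {
	var opt editOption
	if err := json.Unmarshal([]byte(body), &opt); err != nil {
		return nil, err
	}
	if opt.StatusCheckContexts == nil {
		return current, nil // field absent or null: leave the list untouched
	}
	return opt.StatusCheckContexts, nil
}

func main() {
	current := []string{"ci/build"}
	for _, body := range []string{
		`{}`,
		`{"status_check_contexts": null}`,
		`{"status_check_contexts": []}`,
		`{"status_check_contexts": ["ci/test", "ci/lint"]}`,
	} {
		got, err := applyContexts(current, body)
		fmt.Println(len(got), err)
	}
}
```

An explicit empty array decodes to a non-nil, zero-length slice, so it clears the list, while an omitted or null field keeps the existing value.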

Also adds a test case. I noticed that [`testAPIEditBranchProtection`

only checks the branch

name](c1c83dbaec/tests/integration/api_branch_test.go (L68))

and no other fields, so I added some extra `GET` calls and specific

checks to make sure the fields are changing properly.

I added those checks to the existing integration test; is that the right
place for them?

Fixes #25564

Fixes #23191

- API v2 search endpoint should return only the latest version matching
the query
- API v3 search endpoint should return `take` packages, not package
versions

I kept sending pull requests that consisted of one-line changes. It's
time to settle this once and for all. (Maybe.)

- Explain Gitea behavior and the consequences of each

setting better, so that the user does not have to consult

the docs.

- Do not use different spellings of identical terms

interchangeably, e.g. `e-mail` and `email`.

- Use more conventional terms to describe the same things,

e.g. `Confirm Password` instead of `Re-Type Password`.

- Introduces additional clarification for Mirror Settings

- Small adjustments in test

- This is a cry for help.

- Grammar and spelling consistencies for en-US locale

(e.g. cancelled -> canceled)

- Introduce tooltip improvements.

---------

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Co-authored-by: Giteabot <teabot@gitea.io>

- The permalink and 'Reference in New Issue' URL of a renderable file
(one where you can see both the source and a rendered version, such as
Markdown) doesn't contain `?display=source`. As a result, the URL has no
effect, because the rendered version is shown by default rather than the
source.
- Add `?display=source` to the permalink URL and to 'Reference in New
Issue' if it's a renderable file.

- Add integration testing.

Refs: https://codeberg.org/forgejo/forgejo/pulls/1088

Co-authored-by: Gusted <postmaster@gusted.xyz>

Co-authored-by: Giteabot <teabot@gitea.io>

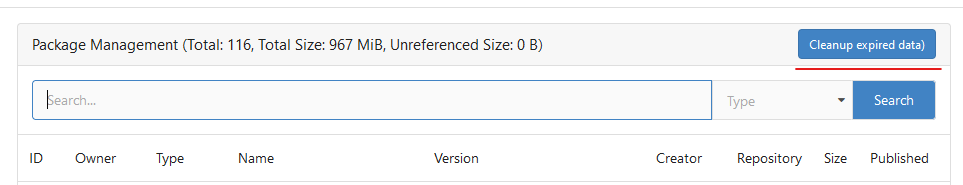

Until now expired package data gets deleted daily by a cronjob. The

admin page shows the size of all packages and the size of unreferenced

data. The users (#25035, #20631) expect the deletion of this data if

they run the cronjob from the admin page but the job only deletes data

older than 24h.

This PR adds a new button which deletes all expired data.

---------

Co-authored-by: silverwind <me@silverwind.io>

Follow #25229

Copy from

https://github.com/go-gitea/gitea/pull/26290#issuecomment-1663135186

The bug is that we cannot get changed files for the

`pull_request_target` event. This event runs in the context of the base

branch, so we won't get any changes if we call

`GetFilesChangedSinceCommit` with `PullRequest.Base.Ref`.

- `setting.UI.Notification.EventSourceUpdateTime` is 10 seconds by
default, which adds a 10-second delay before the test succeeds.
- Lower the interval to reduce this to a delay of at most 3 seconds (the
code only sends events when they are at least 2 seconds old).

(cherry picked from commit 3adb9ae6009ff3ddebaed4875e086343f668ef7b)

Refs: https://codeberg.org/forgejo/forgejo/pulls/1166

Co-authored-by: Gusted <postmaster@gusted.xyz>

Co-authored-by: Giteabot <teabot@gitea.io>

Not too important, but I think that it'd be a pretty neat touch.

Also fixes some layout bugs introduced by a previous PR.

---------

Co-authored-by: Gusted <postmaster@gusted.xyz>

Co-authored-by: Caesar Schinas <caesar@caesarschinas.com>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Fix #24662.

Replace #24822 and #25708 (although it has been merged)

## Background

In the past, Gitea supported issue searching with a keyword and

conditions in a less efficient way. It worked by searching for issues

with the keyword and obtaining limited IDs (as it is heavy to get all)

on the indexer (bleve/elasticsearch/meilisearch), and then querying with

conditions on the database to find a subset of the found IDs. This is

why the results could be incomplete.

To solve this issue, we need to store all fields that could be used as

conditions in the indexer and support both keyword and additional

conditions when searching with the indexer.

## Major changes

- Redefine `IndexerData` to include all fields that could be used as

filter conditions.

- Refactor `Search(ctx context.Context, kw string, repoIDs []int64,

limit, start int, state string)` to `Search(ctx context.Context, options

*SearchOptions)`, so it supports more conditions now.

- Change the data type stored in `issueIndexerQueue`. Use

`IndexerMetadata` instead of `IndexerData` in case the data has been

updated while it is in the queue. This also reduces the storage size of

the queue.

- Enhance searching with Bleve/Elasticsearch/Meilisearch, make them

fully support `SearchOptions`. Also, update the data versions.

- Keep most of the logic of the database indexer, but remove
`issues.SearchIssueIDsByKeyword` in `models` to avoid confusion about
where the entry point for searching issues is.

- Start a Meilisearch instance to test it in unit tests.

- Add unit tests with almost full coverage to test

Bleve/Elasticsearch/Meilisearch indexer.

---------

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

The API should only return the real email address of a user if the
caller is logged in. The check that is supposed to do this doesn't work.
This PR fixes that. It is not really a security issue, but it can lead
to spam.

---------

Co-authored-by: silverwind <me@silverwind.io>

Fix #25934

Add an `ignoreGlobal` parameter to `reqUnitAccess` and only check
globally disabled units when `ignoreGlobal` is false. This way, org-level
and user-level projects are not affected by a globally disabled
`repo.projects` unit.

The setting `MAILER_TYPE` is deprecated.

According to the config cheat sheet, it should be `PROTOCOL`.

---------

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

The version listed in rpm repodata should only contain the rpm version

(1.0.0) and not the combination of version and release (1.0.0-2). We

correct this behaviour in primary.xml.gz, filelists.xml.gz and

others.xml.gz.

Signed-off-by: Peter Verraedt <peter@verraedt.be>

Replace #25892

Close #21942

Close #25464

Major changes:

1. Serve "robots.txt" and ".well-known/security.txt" in the "public"

custom path

* All files in "public/.well-known" can be served, just like

"public/assets"

3. Add a test for ".well-known/security.txt"

4. Simplify the "FileHandlerFunc" logic, now the paths are consistent so

the code can be simpler

5. Add CORS header for ".well-known" endpoints

6. Add logs to tell users they should move some of their legacy custom

public files

```

2023/07/19 13:00:37 cmd/web.go:178:serveInstalled() [E] Found legacy public asset "img" in CustomPath. Please move it to /work/gitea/custom/public/assets/img

2023/07/19 13:00:37 cmd/web.go:182:serveInstalled() [E] Found legacy public asset "robots.txt" in CustomPath. Please move it to /work/gitea/custom/public/robots.txt

```

This PR is not breaking.

---------

Co-authored-by: silverwind <me@silverwind.io>

Co-authored-by: Giteabot <teabot@gitea.io>

Replace #10912

And there are many new tests to cover the CLI behavior

There were some concerns about the "option order in hook scripts"
(https://github.com/go-gitea/gitea/pull/10912#issuecomment-1137543314).
This is not a problem now, because the hook script uses the
`/gitea hook --config=/app.ini pre-receive` format. "config" is a global
option, so it can appear anywhere.

----

## ⚠️ BREAKING ⚠️

This PR does its best to avoid breaking anything. The major changes are:

* `gitea` itself won't accept web's options: `--install-port` / `--pid`

/ `--port` / `--quiet` / `--verbose` .... They are `web` sub-command's

options.

* Use `./gitea web --pid ....` instead

* `./gitea` can still run the `web` sub-command as shorthand, with

default options

* The sub-command's options must follow the sub-command

* Before: `./gitea --sub-opt subcmd` might be equivalent to
`./gitea subcmd --sub-opt` (well, might not ...)

* After: only `./gitea subcmd --sub-opt` could be used

* The global options like `--config` are not affected

Fix #25776. Close #25826.

In the discussion of #25776, @wolfogre's suggestion was to remove the
commit statuses `running` and `warning` to stay consistent with GitHub.

References:

- https://docs.github.com/en/rest/commits/statuses?apiVersion=2022-11-28#about-commit-statuses

## ⚠️ BREAKING ⚠️

The commit statuses of Gitea are now consistent with GitHub: only
`pending`, `success`, `error` and `failure` are supported, while
`warning` and `running` are no longer supported.

---------

Co-authored-by: Jason Song <i@wolfogre.com>

The current Actions artifacts implementation only supports single-file
artifacts. To support uploading multiple files, it needs to:

- save each file as its own db record with the same run-id, the same
artifact-name and the proper artifact-path
- change the artifact upload URL so that it no longer contains an
artifact-id, since multiple files create multiple artifact-ids
- support `path` in the download-artifact action; an artifact should be
downloaded to `{path}/{artifact-path}`
- provide a zip download link in the artifacts list on the summary page
of the repo action view, regardless of whether the artifact contains a
single file or multiple files

Before: the concept "Content string" is used everywhere. It has some

problems:

1. Sometimes it means "base64 encoded content", sometimes it means "raw

binary content"

2. It doesn't work with large files, eg: uploading a 1G LFS file would

make Gitea process OOM

This PR does the refactoring: use "ContentReader" / "ContentBase64"

instead of "Content"

This PR is not breaking because the key in API JSON is still "content":

`` ContentBase64 string `json:"content"` ``

We refactored the token parsing part of `userIDFromToken` into a new
function, `parseToken`. `parseToken` returns the string `token` from the
request and a boolean `ok` indicating whether a token exists. This lets
us distinguish between a missing token and an inconsistent token in the
`verify` function, which solves the problem of there being no proper
error message when the token is inconsistent.
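The shape of such a function might look like the sketch below. Only the `(token, ok)` return pair comes from the description above; the lookup order (query parameters first, then the `Authorization` header) and the accepted schemes are assumptions for this example, and `newRequest` is a helper added only for the demonstration.

```go
package main

import (
	"fmt"
	"net/http"
	"strings"
)

// parseToken extracts a token string from the request. The boolean reports
// whether any token was supplied at all, which lets the caller tell a missing
// token apart from one that is present but invalid.
func parseToken(req *http.Request) (string, bool) {
	q := req.URL.Query()
	if t := q.Get("token"); t != "" {
		return t, true
	}
	if t := q.Get("access_token"); t != "" {
		return t, true
	}
	fields := strings.Fields(req.Header.Get("Authorization"))
	if len(fields) == 2 {
		scheme := strings.ToLower(fields[0])
		if scheme == "token" || scheme == "bearer" {
			return fields[1], true
		}
	}
	return "", false
}

// newRequest is a small helper for the example below.
func newRequest(url, authHeader string) *http.Request {
	req, err := http.NewRequest(http.MethodGet, url, nil)
	if err != nil {
		panic(err)
	}
	if authHeader != "" {
		req.Header.Set("Authorization", authHeader)
	}
	return req
}

func main() {
	fmt.Println(parseToken(newRequest("http://example.com/api?token=abc", "")))
	fmt.Println(parseToken(newRequest("http://example.com/api", "Bearer xyz")))
	fmt.Println(parseToken(newRequest("http://example.com/api", "")))
}
```

A caller can then report "token is required" when `ok` is false and a different error when a token is present but does not verify.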

close #24439

related #22119

---------

Co-authored-by: Jason Song <i@wolfogre.com>

Co-authored-by: Giteabot <teabot@gitea.io>

Fixes (?) #25538

Fixes https://codeberg.org/forgejo/forgejo/issues/972

Regression #23879

#23879 introduced a change which prevents read access to packages if a
user is not a member of an organization.

That PR also contained a change which disallows package access if the

team unit is configured with "no access" for packages. I don't think

this change makes sense (at the moment). It may be relevant for private

orgs. But for public or limited orgs that's useless because an

unauthorized user would have more access rights than the team member.

This PR restores the old behaviour "If a user has read access for an

owner, they can read packages".

---------

Co-authored-by: Giteabot <teabot@gitea.io>

related #16865

This PR adds an accessibility check before mounting container blobs.

---------

Co-authored-by: techknowlogick <techknowlogick@gitea.io>

Co-authored-by: silverwind <me@silverwind.io>

This PR displays a pull request creation hint on the repository home
page when there are newly created branches with no pull request. Only
the 2 most recently updated branches from the last 6 hours are displayed.

Inspired by #14003

Replace #14003

Resolves #311

Resolves #13196

Resolves #23743

co-authored by @kolaente

Follow #25229

At present, when the trigger event is `pull_request_target`, the `ref`

and `sha` of `ActionRun` are set according to the base branch of the

pull request. This makes it impossible for us to find the head branch of

the `ActionRun` directly. In this PR, the `ref` and `sha` will always be

set to the head branch and they will be changed to the base branch when

generating the task context.

Fixes #24723

Direct serving of content aka HTTP redirect is not mentioned in any of

the package registry specs but lots of official registries do that so it

should be supported by the usual clients.

Fix #25558

Extract from #22743

This PR adds a repository check when creating or deleting branches via
the API. Mirror repositories and archived repositories cannot do that.

This adds an API for uploading and deleting avatars of users, repos and
organisations. I'm not sure whether this should also be added to the
Admin API.

Resolves #25344

---------

Co-authored-by: silverwind <me@silverwind.io>

Co-authored-by: Giteabot <teabot@gitea.io>

Related #14180

Related #25233

Related #22639

Close #19786

Related #12763

This PR changes all branch retrieval methods from reading git data to
reading the database, in order to reduce git read operations.

- [x] Sync git branch information into the database when git data is
pushed
- [x] Create a new table `Branch`, merge some columns of `DeletedBranch`
into the `Branch` table and drop the table `DeletedBranch`.
- [x] Read the `Branch` table when visiting the `code` -> `branch` page
- [x] Read the `Branch` table when listing branch names in the `code`
page dropdown
- [x] Read the `Branch` table when listing refs on the git compare page
- [x] Provide a button on the admin page to manually sync all branches.
- [x] Sync branches if the repository is not empty but the database
branches are empty when visiting pages with a branch list
- [x] Use `commit_time desc` as the default FindBranch order to stay
consistent with the previous behavior; deleted branches are always at
the end.

---------

Co-authored-by: Jason Song <i@wolfogre.com>

Fix #25088

This PR adds the support for

[`pull_request_target`](https://docs.github.com/en/actions/using-workflows/events-that-trigger-workflows#pull_request_target)

workflow trigger. `pull_request_target` is similar to `pull_request`,

but the workflow triggered by the `pull_request_target` event runs in

the context of the base branch of the pull request rather than the head

branch. Since the workflow from the base is considered trusted, it can

access the secrets and doesn't need approvals to run.

# The problem

There were many "path tricks":

* By default, Gitea uses its program directory as its work path

* Gitea tries to use the "work path" to guess its "custom path" and

"custom conf (app.ini)"

* Users might want to use other directories as work path

* The non-default work path should be passed to Gitea by GITEA_WORK_DIR

or "--work-path"

* But some Gitea processes are started without these values

* The "serv" process started by OpenSSH server

* The CLI sub-commands started by site admin

* The paths are guessed by SetCustomPathAndConf again and again

* The default values of "work path / custom path / custom conf" can be

changed when compiling

# The solution

* Use `InitWorkPathAndCommonConfig` to handle these path tricks, and use

test code to cover its behaviors.

* When Gitea's web server runs, write the WORK_PATH to "app.ini", this

value must be the most correct one, because if this value is not right,

users would find that the web UI doesn't work and then they should be

able to fix it.

* Then all other sub-commands can use the WORK_PATH in app.ini to

initialize their paths.

* By the way, when Gitea starts for git protocol, it shouldn't output

any log, otherwise the git protocol gets broken and client blocks

forever.

The "work path" priority is: WORK_PATH in app.ini > cmd arg --work-path > env var GITEA_WORK_DIR > builtin default

The "app.ini" searching order is: cmd arg --config > cmd arg "work path / custom path" > env var "work path / custom path" > builtin default
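The "work path" priority above amounts to picking the first non-empty value from an ordered list. The following is a minimal sketch of that rule only; `workPathSources` and `resolveWorkPath` are hypothetical names, and validation (for example, requiring an absolute path) is left out.

```go
package main

import "fmt"

// workPathSources holds the candidate values; an empty string means "not set".
type workPathSources struct {
	AppIni     string // WORK_PATH in app.ini
	CmdArg     string // --work-path
	EnvVar     string // GITEA_WORK_DIR
	BuiltinDef string // compiled-in default
}

// resolveWorkPath returns the first non-empty value in priority order.
func resolveWorkPath(s workPathSources) string {
	for _, v := range []string{s.AppIni, s.CmdArg, s.EnvVar} {
		if v != "" {
			return v
		}
	}
	return s.BuiltinDef
}

func main() {
	fmt.Println(resolveWorkPath(workPathSources{
		CmdArg:     "/srv/gitea",
		EnvVar:     "/var/lib/gitea",
		BuiltinDef: "/usr/local/gitea",
	}))
}
```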

## ⚠️ BREAKING

If your instance's "work path / custom path / custom conf" doesn't meet

the requirements (eg: work path must be absolute), Gitea will report a

fatal error and exit. You need to set these values according to the

error log.

----

Close #24818

Close #24222

Close #21606

Close #21498

Close #25107

Close #24981

Maybe close #24503

Replace #23301

Replace #22754

And maybe more

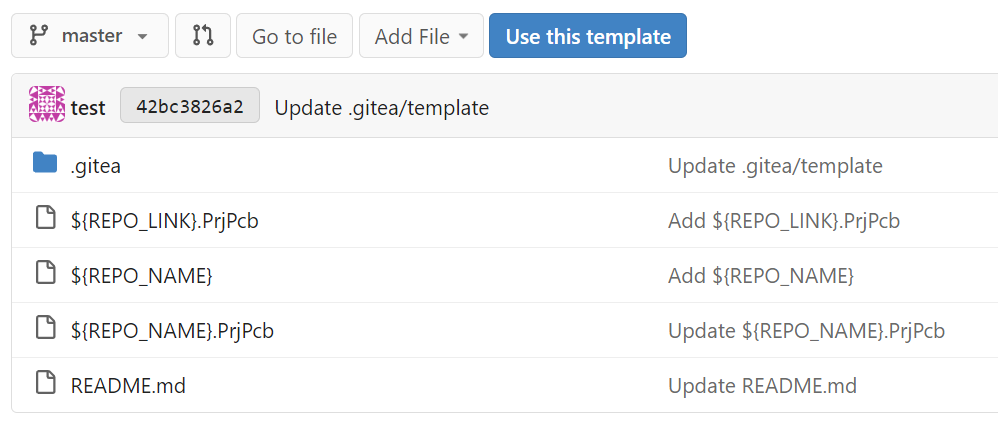

### Summary

Extend the template variable substitution to replace file paths. This

can be helpful for setting up log files & directories that should match

the repository name.

### PR Changes

- Move files matching glob pattern when setting up repos from template

- For security, added ~escaping~ sanitization for cross-platform support

and to prevent directory traversal (thanks @silverwind for the

reference)

- Added unit testing for escaping function

- Fixed the integration tests for repo template generation by passing

the repo_template_id

- Updated the integration testfiles to add some variable substitution &

assert the outputs

I had to fix the existing repo template integration test and extend it

to add a check for variable substitutions.
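One common way to keep an expanded file path inside the repository root is sketched below. This is not necessarily the sanitization used in this PR: `sanitizeRelPath` is a hypothetical helper that relies on `path.Clean` applied to a rooted path.

```go
package main

import (
	"fmt"
	"path"
	"strings"
)

// sanitizeRelPath turns an expanded template path into a clean, slash-separated
// path that cannot point outside the repository root.
func sanitizeRelPath(p string) string {
	p = strings.ReplaceAll(p, "\\", "/") // treat Windows separators like slashes
	// Cleaning a rooted path resolves ".." segments without going above "/".
	cleaned := path.Clean("/" + p)
	return strings.TrimPrefix(cleaned, "/")
}

func main() {
	for _, p := range []string{
		"logs/my-repo.log",
		"../../etc/passwd",
		"a\\..\\..\\b",
	} {
		fmt.Printf("%q -> %q\n", p, sanitizeRelPath(p))
	}
}
```

The result is always relative, so joining it onto the repository directory cannot escape that directory through `..` segments.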


Fix #21072

Username Attribute is not a required item when creating an

authentication source. If Username Attribute is empty, the username

value of LDAP user cannot be read, so all users from LDAP will be marked

as inactive by mistake when synchronizing external users.

This PR improves the sync logic: if the username is empty, the email
address is used to find the user.